Ambient AI and agentic AI are the two halves of the healthcare automation stack: ambient captures the clinical encounter as structured documentation, and agentic acts on that documentation to execute administrative workflows. The integration between them, what we call the baton pass, is where the encounter note becomes an automatic trigger for prior authorization, eligibility verification, and claim submission. That handoff is not yet standardized, and the gap is where 13 hours of physician and staff time per physician disappear every week.

Table of Contents

- What is the difference between ambient AI and agentic AI in healthcare?

- Why is the baton pass between ambient and agentic AI still being dropped?

- How much does the broken handoff actually cost a practice?

- What does a working ambient-to-agentic integration look like?

- How do independent practices capture the baton-pass opportunity?

- Frequently Asked Questions

- Key Takeaways

What is the difference between ambient AI and agentic AI in healthcare?

Ambient AI listens during clinical encounters and converts speech into structured documentation. Agentic AI reads that documentation and executes the downstream administrative workflows — prior authorizations, eligibility checks, denial appeals, claim submissions. Ambient captures the encounter. Agentic acts on it. They are complementary layers, not competing categories.

The two layers developed on separate timelines. Ambient AI emerged out of scribing and dictation: platforms like DeliverHealth's InstaNote, Nuance DAX, and Abridge turn a physician's spoken encounter into a finalized note that becomes the source of truth for coding, claims, and follow-up. Agentic AI emerged out of workflow automation: systems that read structured inputs and execute multi-step tasks inside payer portals, EHRs, and clearinghouses. This is Agentman's domain.

How do the two layers differ in what they produce?

| Dimension | Ambient AI | Agentic AI |
|---|---|---|
| Primary input | Physician-patient conversation | Structured clinical signals (medications, procedures, referrals) |
| Primary output | Encounter note, problem list, coding hints | Completed prior auth packet, eligibility check, claim submission |
| Where it runs | Exam room, during the visit | Back office, after the note is finalized |
| Who reviews the output | Physician (signs the note) | Billing or clinical staff (approves the submission) |
| Time horizon | Minutes (the encounter) | Hours to days (the workflow lifecycle) |
| Representative vendors | Abridge, Nuance DAX, DeliverHealth InstaNote | Agentman, emerging agentic RCM platforms |

The baton is the encounter note. The handoff point is clinical event detection — the moment a medication, procedure, or referral appears in the finalized note, an agent should receive a structured signal and load the corresponding workflow. As Prasad Thammineni, CEO of Agentman, puts it: "Ambient AI captures the encounter, passes the baton to Agentman, and the prior auth is assembled by the time the physician exits the room."

Why is the baton pass between ambient and agentic AI still being dropped?

Ambient AI and agentic AI were built in parallel rather than in sequence, so neither side designed the standard handshake that converts a clinical event into an agent trigger. Today, a physician finishes dictating, the coding engine assigns CPT codes, and the prior authorization process restarts manually — staff open payer portals and re-enter the exact data the encounter note already contains.

The technical reason is that encounter notes are generated as finished artifacts, not as event streams. A note tells a human reader what happened. An agent needs to know which specific clinical events inside the note require action, which payer rules apply to each one, and which documentation elements satisfy those rules. That translation layer does not exist as a default.
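
One way to picture the missing translation layer is as a record that carries the three things an agent needs: the clinical event, the payer whose rules apply, and the documentation elements that satisfy those rules. The sketch below is illustrative only. `ClinicalEvent`, its field names, `ExamplePayer`, and the sample values are all hypothetical, not a published schema or any vendor's data model.

```python
from dataclasses import dataclass, field


@dataclass
class ClinicalEvent:
    """One actionable item from a signed note (hypothetical shape)."""
    kind: str                      # "medication", "imaging", "referral", ...
    description: str               # what the physician ordered
    payer: str                     # whose rules apply
    required_evidence: tuple       # documentation elements the payer rule asks for
    evidence_found: dict = field(default_factory=dict)  # elements located in the note

    def missing_evidence(self):
        """Required elements the note did not supply."""
        return [e for e in self.required_evidence if e not in self.evidence_found]


event = ClinicalEvent(
    kind="medication",
    description="new GLP-1 prescription",
    payer="ExamplePayer",
    required_evidence=("lab_values", "step_therapy_documentation"),
    evidence_found={"lab_values": "present in note"},
)
print(event.missing_evidence())  # ['step_therapy_documentation']
```

A finished note answers "what happened"; a record like this answers "what still has to be done, and with which evidence", which is the question an agent has to start from.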

Which clinical events actually need to trigger downstream work?

The encounter note contains several classes of signals that should automatically fan out to different agents:

- New medication prescriptions requiring formulary check and prior authorization (common for GLP-1s, biologics, specialty drugs)

- Imaging orders requiring payer pre-authorization (CT, MRI, PET)

- Procedures requiring pre-service approval (injections, ablations, surgical scheduling)

- Referrals requiring network verification, scheduling coordination, and records transfer

- Durable medical equipment orders requiring coverage verification and supplier selection

- Follow-up plans requiring appointment scheduling and patient outreach

Each of these is a distinct workflow with its own payer-specific rules, documentation requirements, and turnaround expectations. Without automated event detection, staff scan the note manually, identify the actionable items, look up coverage, and fill portal forms — every time.
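
The fan-out described above can be sketched as a lookup from event class to the workflows it should start. This is a minimal illustration, assuming events have already been detected and labeled; the key names, workflow names, and the `manual_review` fallback are hypothetical labels chosen to mirror the list, not an existing API.

```python
# Event classes and their downstream workflows, mirroring the list above.
WORKFLOWS_BY_EVENT = {
    "medication": ["formulary_check", "prior_authorization"],
    "imaging": ["pre_authorization"],
    "procedure": ["pre_service_approval"],
    "referral": ["network_verification", "scheduling_coordination", "records_transfer"],
    "dme": ["coverage_verification", "supplier_selection"],
    "follow_up": ["appointment_scheduling", "patient_outreach"],
}


def fan_out(event_kinds):
    """Return (event_kind, workflow) pairs; unrecognized kinds fall back to manual review."""
    tasks = []
    for kind in event_kinds:
        for workflow in WORKFLOWS_BY_EVENT.get(kind, ["manual_review"]):
            tasks.append((kind, workflow))
    return tasks


for task in fan_out(["medication", "referral"]):
    print(task)
```

A note with one new prescription and one referral yields five separate tasks here, which is the work staff currently identify by reading the note by hand.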

How much does the broken handoff actually cost a practice?

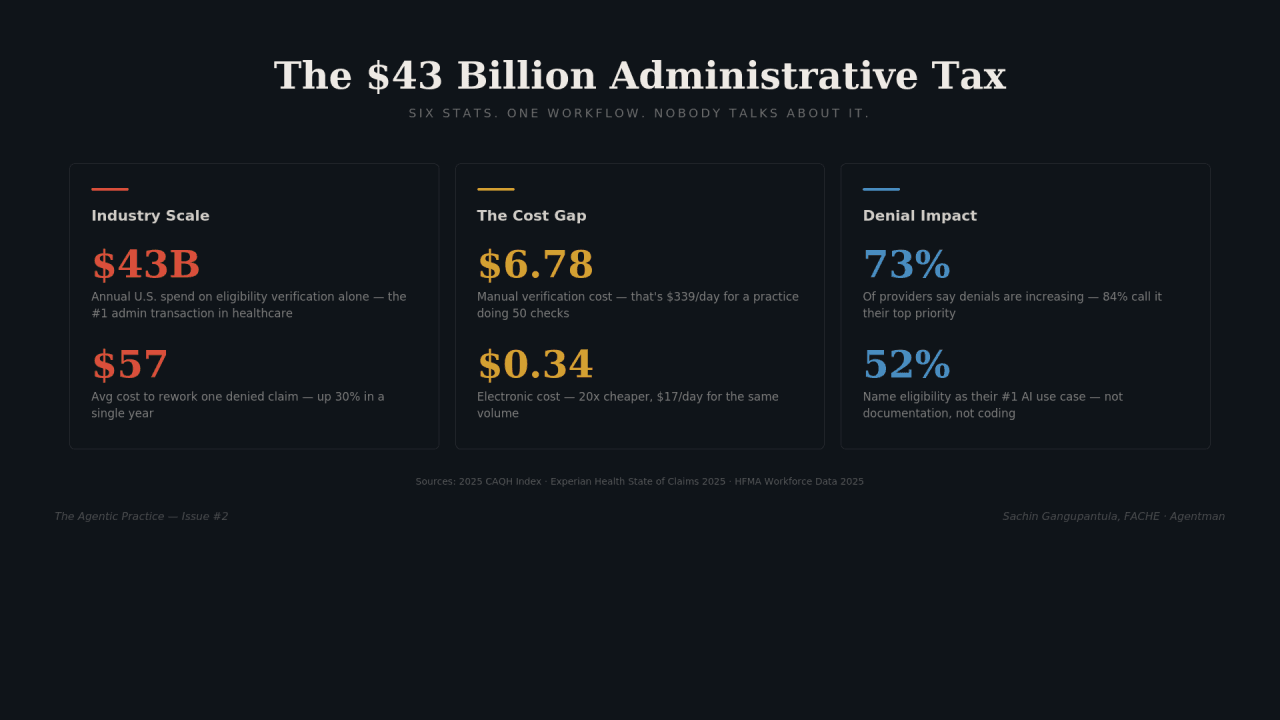

The cost of the missing integration shows up in staff hours, denial rates, and revenue leakage. The AMA's 2024 Prior Authorization Physician Survey of 1,000 practicing physicians found that prior authorization alone consumes 13 hours of physician and staff time per physician per week, with practices completing an average of 39 prior auth requests per physician per week.

What do the numbers look like for a typical specialty practice?

| Metric | Value | Source |
|---|---|---|
| Prior auth requests per physician per week | 39 | AMA Prior Authorization Survey (2024) |
| Physician + staff hours per physician per week on PA | 13 | AMA Prior Authorization Survey (2024) |
| Physicians with staff working exclusively on PA | 40% | AMA Prior Authorization Survey (2024) |
| EHR and desk work hours per hour of direct patient care | ~2 | AMA / Annals of Internal Medicine time-motion study (2016) |
| Physicians reporting PA delays necessary care | 93% | AMA Prior Authorization Survey (2024) |
| Physicians reporting PA increases burnout | 89% | AMA Prior Authorization Survey (2024) |

For a five-physician independent specialty practice, those numbers compound into roughly 65 hours of physician and staff time per week (5 physicians × 13 hours), more than 1.5 full-time equivalents at 40 hours each, directed entirely at a workflow where the clinical information needed to complete it already exists in the encounter note. The 2023 JAMA Network Open follow-up to the original time-motion research confirmed the two-to-one ratio has held steady across specialties for nearly a decade.
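
The practice-level arithmetic is easy to rerun for a different headcount. The short calculation below uses only the two AMA survey figures cited above; the 40-hour full-time week is an assumption, and the per-request figure is a simple average derived from those two numbers rather than a value the survey reports.

```python
HOURS_PER_PHYSICIAN_PER_WEEK = 13      # AMA 2024 survey figure cited above
REQUESTS_PER_PHYSICIAN_PER_WEEK = 39   # AMA 2024 survey figure cited above
FTE_HOURS_PER_WEEK = 40                # assumption: one full-time week


def weekly_pa_load(physicians):
    """Scale the per-physician survey averages to a whole practice."""
    hours = physicians * HOURS_PER_PHYSICIAN_PER_WEEK
    return {
        "hours": hours,
        "fte": hours / FTE_HOURS_PER_WEEK,
        "requests": physicians * REQUESTS_PER_PHYSICIAN_PER_WEEK,
        # Derived average, not a survey result.
        "minutes_per_request": 60 * HOURS_PER_PHYSICIAN_PER_WEEK
        / REQUESTS_PER_PHYSICIAN_PER_WEEK,
    }


load = weekly_pa_load(5)
print(load["hours"], load["fte"], load["requests"])  # 65 1.625 195
```

On these averages, each request works out to about 20 minutes of combined physician and staff time.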

Revenue leakage compounds the staffing cost. When prior auth requests are delayed or denied, treatments are postponed, patients abandon care (78% of physicians report this happening often or sometimes per the AMA 2024 survey), and the practice eats the cost of the unbillable clinical time already delivered. The handoff gap is not a convenience problem; it is a survival problem for independent practices operating on compressed margins.

What does a working ambient-to-agentic integration look like?

A working integration turns the finalized encounter note into a trigger event that fans out to the correct payer-specific agent, which assembles the submission from the note's clinical content and queues it for human review. The staff member opens a completed request packet, not a blank portal form.

How does the integration work mechanically?

The flow runs in five steps from the moment the physician signs the note:

1. Note finalization: The ambient AI platform produces a structured encounter note, signed by the physician.

2. Event extraction: An agent parses the note for actionable clinical events (medications, procedures, imaging, referrals) and classifies each by payer and service line.

3. Skill routing: Each event is matched to a payer-specific skill: the Aetna GLP-1 skill encodes required lab values and step-therapy documentation; the Blue Shield imaging skill knows the evidence format for CT approvals; the Medicare DME skill knows face-to-face documentation requirements.

4. Submission assembly: The skill pulls the required clinical evidence from the encounter note, combines it with patient demographic and coverage data from the EHR, and drafts a submission formatted for the target payer portal or API.

5. Human review and approval: The billing or clinical staff member opens a completed submission, verifies accuracy, and approves. Only then does anything leave the practice.
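
The five steps can be sketched end to end in a few functions. This is a toy illustration under stated assumptions, not a description of any product's internals: the note is assumed to arrive as an already-structured dictionary, and `SKILLS`, `Submission`, `ExamplePayer`, and every function and field name are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    event: str
    payer: str
    evidence: dict
    status: str = "pending_review"   # step 5: nothing is filed until a human approves


# Step 3: hypothetical skill registry, keyed by (payer, event type),
# listing the evidence each skill requires.
SKILLS = {
    ("ExamplePayer", "medication"): ["lab_values", "step_therapy"],
}

ACTIONABLE = {"medication", "procedure", "imaging", "referral"}


def extract_events(note):
    """Step 2: keep only the orders that need downstream action."""
    return [order for order in note["orders"] if order["type"] in ACTIONABLE]


def assemble(note, order):
    """Steps 3-4: route to a skill, then pull the evidence that skill requires."""
    required = SKILLS.get((note["payer"], order["type"]))
    if required is None:
        return None  # no skill for this payer and event: leave it to the manual process
    evidence = {key: note["findings"].get(key) for key in required}
    return Submission(order["name"], note["payer"], evidence)


def process_signed_note(note):
    """Step 1 gate plus steps 2-4; the returned list is the human review queue."""
    if not note.get("signed"):
        return []
    drafts = (assemble(note, order) for order in extract_events(note))
    return [draft for draft in drafts if draft is not None]


note = {
    "signed": True,
    "payer": "ExamplePayer",
    "orders": [{"type": "medication", "name": "GLP-1 prescription"}],
    "findings": {"lab_values": "documented", "step_therapy": "documented"},
}
queue = process_signed_note(note)
print(queue[0].status)  # pending_review
```

The function returns a queue rather than sending anything, so the last step stays with the staff member who reviews and approves.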

Why is payer-specific intelligence the defensible layer?

The skill library behind the agents is the durable asset. Every submission outcome — approval, denial, request for additional information — refines the payer-specific intelligence for that skill. A new endocrinology practice onboarding inherits the GLP-1 authorization skills that similar practices have already refined in production, rather than starting from a blank payer playbook.

This is the opposite of the prevailing RPA approach, which scripts individual portal workflows and breaks every time a payer changes a form field. Skill-based agents encode the clinical and documentation logic separately from the portal interface, which means the same skill adapts as payer processes evolve. According to the 2024 CAQH Index on administrative simplification, the U.S. healthcare system could save $20 billion annually by fully transitioning to electronic administrative transactions — a pool of value the agentic layer is positioned to capture.
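
The separation between documentation logic and portal interface can be illustrated with a small sketch. It assumes a design in which a skill only decides what evidence a submission needs and a thin adapter maps that evidence onto a portal's field names. `Skill`, `portal_form_v1`, `portal_form_v2`, and the field names are hypothetical and do not describe any real payer portal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """Payer-specific documentation logic; knows nothing about portal layouts."""
    payer: str
    service: str
    required_fields: tuple

    def build_packet(self, note_facts):
        missing = [f for f in self.required_fields if f not in note_facts]
        if missing:
            raise ValueError(f"note is missing: {missing}")
        return {f: note_facts[f] for f in self.required_fields}


# Adapters only translate a packet into the field names a portal expects.
def portal_form_v1(packet):
    return {"LabValues": packet["lab_values"], "StepTherapy": packet["step_therapy"]}


def portal_form_v2(packet):
    # The payer renamed a form field: only the adapter changes, not the skill.
    return {"LabResults": packet["lab_values"], "StepTherapy": packet["step_therapy"]}


def draft_submission(skill, note_facts, adapter):
    return adapter(skill.build_packet(note_facts))


skill = Skill("ExamplePayer", "GLP-1", ("lab_values", "step_therapy"))
facts = {"lab_values": "documented", "step_therapy": "documented"}
print(draft_submission(skill, facts, portal_form_v1))
print(draft_submission(skill, facts, portal_form_v2))
```

A scripted portal workflow would fail on the renamed field, whereas in this arrangement the skill is untouched and only the adapter is swapped.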

How do independent practices capture the baton-pass opportunity?

Independent practices capture the opportunity by pairing their ambient AI platform with an agentic layer that reads the same encounter note and executes the downstream workflows. The goal is not to replace staff but to change what staff do. A billing coordinator who previously spent hours in payer portals instead reviews submissions that agents have already prepared, which lets the same coordinator support a larger patient panel with faster turnaround.

What is the architecture that makes this safe for clinical operations?

Agents assemble. Humans approve. That principle is not a constraint layered on afterward; it is the architecture. Every output is a finished work product queued for review, never an autonomous submission. Staff confirm before anything is filed with a payer, which keeps the clinical and compliance accountability with the practice. The transformation is in the assembly work, not in the approval authority.
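
One way to make "agents assemble, humans approve" structural rather than procedural is to have the filing function itself refuse unapproved drafts. The sketch below shows that idea under simple assumptions; `Draft`, `NotApprovedError`, `file_with_payer`, and the `send` callback are hypothetical names, and a real system would also need authentication and an audit trail.

```python
from dataclasses import dataclass
from typing import Optional


class NotApprovedError(Exception):
    """Raised when something tries to file a draft no human has approved."""


@dataclass
class Draft:
    packet: dict
    approved_by: Optional[str] = None   # set only by a named staff reviewer

    def approve(self, reviewer):
        if not reviewer:
            raise ValueError("a named reviewer is required")
        self.approved_by = reviewer


def file_with_payer(draft, send):
    """Send the packet only if a named reviewer has approved it."""
    if draft.approved_by is None:
        raise NotApprovedError("draft has not been reviewed")
    send(draft.packet)
    return draft.approved_by


sent = []
draft = Draft({"service": "GLP-1 prior authorization"})
try:
    file_with_payer(draft, sent.append)
except NotApprovedError:
    print("blocked: no reviewer")

draft.approve("billing coordinator")
file_with_payer(draft, sent.append)
print(len(sent))  # 1
```

Because the approver's name travels with the draft, every filed packet has a named reviewer on staff.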

For independent practices, this architecture matters for three reasons:

- Liability stays with the practice, not the vendor — human approval before submission means every filed document has a named reviewer on staff.

- Denial patterns improve outcomes over time — every denial teaches the payer-specific skill what the approval pattern actually requires, compounding accuracy month over month.

- Staffing flexibility increases — the same billing team can absorb practice growth without proportional headcount increases, because the assembly work scales on the agent side.

The practices that move first on ambient-to-agentic integration are the ones that pair a capable documentation layer with an agentic execution layer built for their payer mix — not the ones waiting for a single vendor to deliver both sides.

Frequently Asked Questions

What is the difference between ambient AI and agentic AI in healthcare?

Ambient AI converts clinical encounters into structured documentation — the encounter note, problem list, and coding hints. Agentic AI receives signals from that documentation and executes downstream administrative workflows like prior authorizations, eligibility checks, denial appeals, and claim submissions. Ambient captures the encounter; agentic acts on it. They are complementary layers in the healthcare automation stack, not competing products.

Why does prior authorization still require manual work if the encounter note already contains the clinical information?

Because the integration between the documentation layer and the execution layer has not been standardized. Encounter notes are generated as finished artifacts for human readers, not as event streams for agents. Staff manually scan the note, identify actionable clinical events, look up payer coverage rules, and re-enter information into portal forms. The baton pass — converting a signed clinical event into an automated agent trigger — is the missing piece.

Are prior authorizations submitted automatically once the agent assembles them?

No. Agents prepare the submission and queue it for human review. A billing or clinical staff member verifies accuracy and approves before anything is filed with a payer. The practice retains full clinical and compliance accountability. What changes is not the approval authority but the assembly work — staff stop doing portal data entry and start doing final review at much higher volume.

How much time does a typical specialty practice spend on prior authorization today?

According to the 2024 AMA Prior Authorization Physician Survey of 1,000 practicing physicians, physicians and their staff spend 13 hours per physician per week on prior authorization and complete 39 PA requests per physician per week. Forty percent of physicians have staff working exclusively on prior authorizations. For a five-physician specialty practice, that is roughly 65 hours of physician and staff time per week directed at a single administrative workflow.

Does agentic AI replace the ambient AI platform?

No. Agentic AI depends on ambient AI as its upstream input. The encounter note produced by the ambient platform is the trigger that activates the agentic workflows. Practices that have already deployed ambient AI have completed the hardest part of the integration — the structured clinical documentation — and agentic AI extends the value of that investment into the back-office operations where administrative time is lost.

Which payer-specific skills matter most for independent specialty practices?

The highest-volume skills depend on specialty, but common anchors include GLP-1 prior authorization (endocrinology, primary care), advanced imaging pre-authorization (orthopedics, oncology, neurology), biologics authorization (rheumatology, dermatology, GI), DME coverage verification (pulmonology, sleep, orthopedics), and specialty pharmacy step-therapy documentation. Skills are payer-specific because the rules and formats differ across Aetna, UnitedHealthcare, Blue Shield plans, and Medicare Advantage — and the same drug or procedure can require different evidence for different payers.

Key Takeaways

- Ambient AI and agentic AI are complementary layers: ambient captures the clinical encounter, agentic acts on it. Neither replaces the other.

- The baton pass is the integration point: converting a finalized encounter note into structured signals that trigger payer-specific agents is the standard that does not yet exist.

- The cost of the broken handoff is measurable: 13 hours of physician and staff time per physician per week on prior auth alone, per the 2024 AMA survey.

- Working integrations follow a five-step flow: note finalization, event extraction, skill routing, submission assembly, human review. Agents never submit autonomously.

- Payer-specific skills are the defensible layer: skill libraries improve with every submission outcome and propagate across every practice on the platform.

- Independent practices capture the opportunity by pairing their ambient platform with an agentic execution layer built for their payer mix, not by waiting for a single vendor to deliver both.

If you are building the ambient layer, or if you run an independent practice already using ambient AI and want to close the back-office loop, talk to us.

Last Updated: April 20, 2026 · Next Review Due: July 19, 2026

Prasad Thammineni is co-founder and CEO of Agentman. Agentman builds the agentic layer for healthcare back-office operations, starting with independent specialty practices.